The use of Human-Centered Design (HCD) principles in conducting a needs assessment

Introduction

Human-centered design (HCD) originated in ergonomics and engineering as a technique for improving the design of management information systems and product development (Rubin & Chisnell, 2011). It has since evolved to feature prominently in computer science and artificial intelligence, and is favored for its capacity to consider the needs and circumstances of the user or participant at every step of designing a product or solution. In monitoring, evaluation, research and learning (MERL) projects, understanding the needs and behaviors of beneficiaries is critical. HCD is thus a natural fit for MERL projects that aim for the best possible outcomes.

Within the MERL field, a needs assessment is a data collection process used to identify what an organization requires to move from its current state to an improved, desired state. At face value, understanding needs seems like an inherently participant-centered process. However, as the author of this article, Mutsa Chinyamakobvu, realized, conducting a needs assessment does not automatically translate to participant centricity. As she found herself repeatedly re-evaluating what the “users” in her case needed to gain from the implementation she would carry out, it became apparent that her desire to craft a perfect course may not have served the participants optimally.

The ways in which HCD and MERL methodologies overlap could be summarized as:

| HCD | MERL |
|---|---|
| HCD addresses implementation strategies using evidence-based methods. | MERL systems assess whether implementation strategies are achieving intended outcomes, and ensure learning from setbacks happens whenever they occur. |
| HCD seeks to close the longstanding research-to-practice gap¹ (Lyon AR et al., 2020) and improve the usability of solutions (Hartson, 1998). | MERL seeks to improve outcomes and impact by identifying bottlenecks and misalignments between outputs and outcomes through periodic monitoring and evaluation of project outputs and outcomes. |

Purpose of this Case Study

This case study explores the implementation of an HCD approach to conducting a needs assessment of teaching staff at a school in Uganda. Having been commissioned by Professors Without Borders to give a short course on e-learning methodologies and opportunities, Mutsa conducted a needs assessment as a prelude to designing a relevant course whose objectives would meet the needs of the organization.

This article will discuss the context of the particular case that inspired this research on the synergies between HCD and MERL, the unstructured methods used to assess needs, and how HCD could have been better incorporated.

Key Takeaways

- A multi-faceted, unstructured data collection approach to a needs assessment can elicit broad and relevant data about your users, but that data, though comprehensive, may still need extensive processing before use.

- Considering in careful detail the setting in which an implementation will be used is an effective way to center your immediate users.

Context

In August 2022, a group of lecturers volunteering for Professors Without Borders were welcomed to a rural school in Uganda to give a short course to the teaching staff on the “Opportunities of 21st-Century E-Learning Methodologies”. The volunteers had little knowledge of how the teaching staff were delivering their classes at the time. Due to connectivity issues, it was nearly impossible to establish any details in advance to prepare for the two-week course. Even when a communication channel was available, it was clear that hearing and understanding one another would be a barrier that had to be considered carefully when choosing an approach to deliver the course.

Uganda Rural Development Training (URDT) Girls’ School is an all-girls school that was established in 2000 in Kagadi District in western Uganda. The school was instituted to “fill the need for education that is linked to development and women empowerment”. Its pedagogy follows the 2-generation approach, in which parents learn along with their children to “develop a shared vision for their home, analyze their current situation, apply systems thinking, team learning, plan together and learn new skills”.

The students are viewed as change agents in their homes and in the community: they are encouraged to study while also being equipped through “training to generate sustainable income, health, family cooperation, and peace at home”. This education is transferred back to the home through various projects that uplift the family economically, and through educative theater, workshops and radio programmes. As a result, students as young as seventeen can attest, for example, that they have built their family a house with revenue from family farming projects. In contrast to other schools, URDT Girls’ School aims to produce “functionally literate, principled, entrepreneurial and responsible citizens”.

It seemed likely to the author that cultural differences in how URDT teaching staff and the visiting lecturers engaged, taught, learned, communicated, and prioritized activities made a typical needs-assessment data collection process inadequate. Often, assessment data collection tools consist of online or paper surveys and interviews that are designed in light of existing data. Obtaining existing data was a substantial challenge in an agriculture-focused institution where teaching, assessment and communication methods did not prioritize written accounts or data storage in ways common to the visiting lecturers. The teaching staff’s experience with tech tools was uneven and limited. Their teaching methodology prioritized group discussions and practical hands-on work to enable swift rural transformation. As such, it was decided to run focus group interviews, both to draw as much information as possible from the variety of responses given and to account for the gaps in hearing and understanding identified in earlier interactions between the volunteer lecturers and URDT staff.

Unstructured data collection methods to assess needs

The first round of focus group data collection consisted of an adapted closed card sorting activity. In small groups of three to four, the teaching staff stuck up post-it notes listing:

- what e-learning tools or methods they had used before, and

- what they hoped to gain from the course.

The resulting data showed that they already knew about many of the common approaches: Zoom, Learning Management Systems, online assessments, and other multimedia tools to encourage attendance and participation. However, their responses about what they hoped to gain from the course were vague: they listed the same tools without elaborating on how they wanted to improve their use of them. The scope of the words “tools you have used before” had not been clarified to exclude single-use experiences, and time spent in the lab later revealed that they knew of the tools but had little practice with them.

Since the depth of knowledge of e-learning methods was still not evident, the author partnered with a fellow visiting lecturer to run two parallel sessions with two separate objectives. One session was a facilitated physical game that required small groups to race to an endpoint while being guided by a team lead. The team lead knew the steps to the end but was not allowed to reveal them; they could only indicate whether each step taken was right or wrong. Within the groups, members took turns trying to reach the end by retracing the successful steps of the previous member and guessing the next step. The groups also competed to be the first to reach the endpoint. This exercise was not directly related to e-learning. Its objective was to break the ice and encourage the teaching staff to be as forthcoming afterwards as they had been during the game. In the highly engaged round-table debrief that followed, the teaching staff shared multiple ways in which they had tried to use e-learning methods and failed. They also proactively discussed whose methods they were choosing to follow and why.

The companion session to the game was held in the computer lab later the same day. Teaching staff had to create a new account on a blogging site to start a personal blog or online profile, and write short blog posts introducing themselves through a brief biography. This session was more challenging for the teaching staff than the visiting lecturers had anticipated, and it shed light on where to begin with the e-learning course.

At this stage, the author finally felt she had enough knowledge to run a survey and ask more informed questions about the different kinds of tools the teaching staff might recognize, the scope of usage, student engagement with the chosen tools, and the alternatives they had chosen when faced with difficult learning leaps in e-teaching methods. To elicit all this, she ran a survey through Google Forms as a third and final data collection exercise to determine the details of what the group would spend the second week learning. After everyone had been set up with a Gmail account, the 30-question survey took only an hour to complete together, with support where needed.

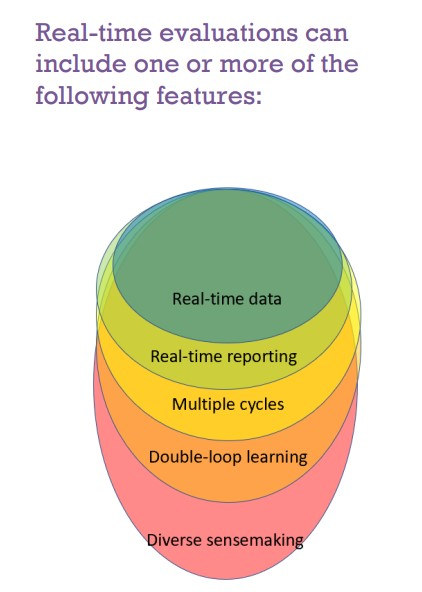

The needs assessment that the author had thought would take only a day had taken an entire week. The week focused on getting oriented to the teaching staff’s ways of engagement and eliciting their requirements for the e-learning course, by employing various real-time evaluation techniques such as impromptu interviews, focus groups and constant observation. The observations from each day informed the course of the next, and the evaluation continued until there was sufficient information to design the required learning materials. Patricia Rogers (Better Evaluation, 2020) aptly describes real-time evaluation as a combination of the following factors: real-time data, real-time reporting, multiple cycles, double loop learning and diverse sensemaking.

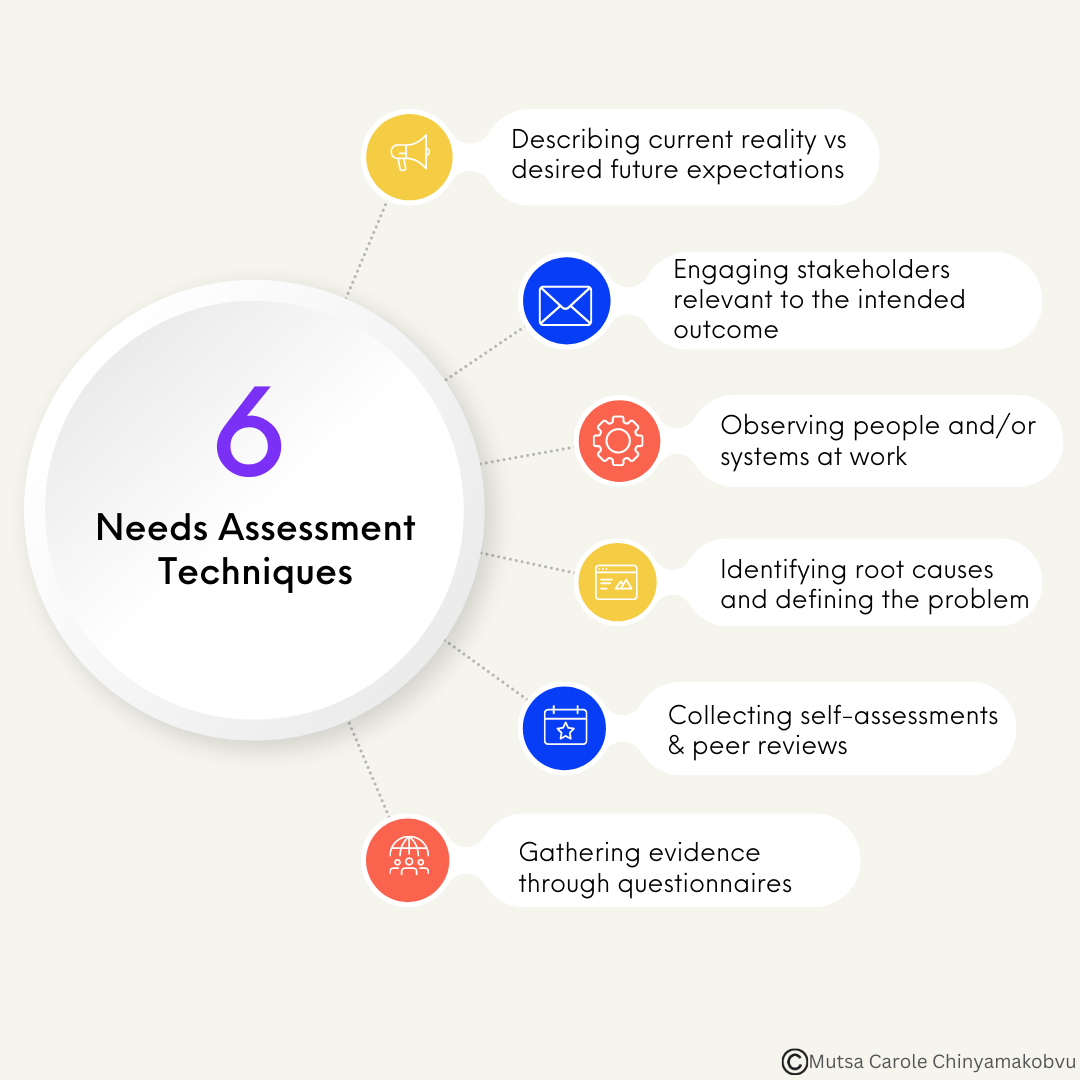

The needs assessment process can consist of mixed iterations of the following techniques: describing current reality versus desired future expectations, engaging stakeholders relevant to the intended outcome, observing people and/or systems at work, identifying root causes and defining the problem, collecting self-assessments and peer reviews, and gathering evidence through questionnaires.

Many of these steps were employed in various ways and sequences in order to determine the objectives, content, and structure of the e-learning methods course to be given to the teaching staff for the remainder of the time spent at URDT.

How we incorporated HCD

The MERL Center definition of HCD is as follows:

“Human-centered design (HCD) is a framework that places the needs, desires and behaviors of key stakeholders, beneficiaries, users or teaching staff at the center of design and implementation decisions. HCD can be used to create and inform digital products, physical products, programs and communities. It can rely on iterative cycles of co-creating, collaborating, testing and refining solutions. HCD requires contextual analysis and understanding in order to base design decisions on how stakeholders or end-users already think, communicate and engage. In MERL, HCD can be used to inform who is asked what, when and how. It can provide insights on why something is happening. In using HCD for MERL, it is important to consider human rights and equitable solutions.”

In aligning with this definition, two things mattered. It was important to figure out what the teaching staff needed in order to continue teaching when students are not physically at school, and it was equally important to figure out how to elicit accurate and relevant information for an effective course delivery.

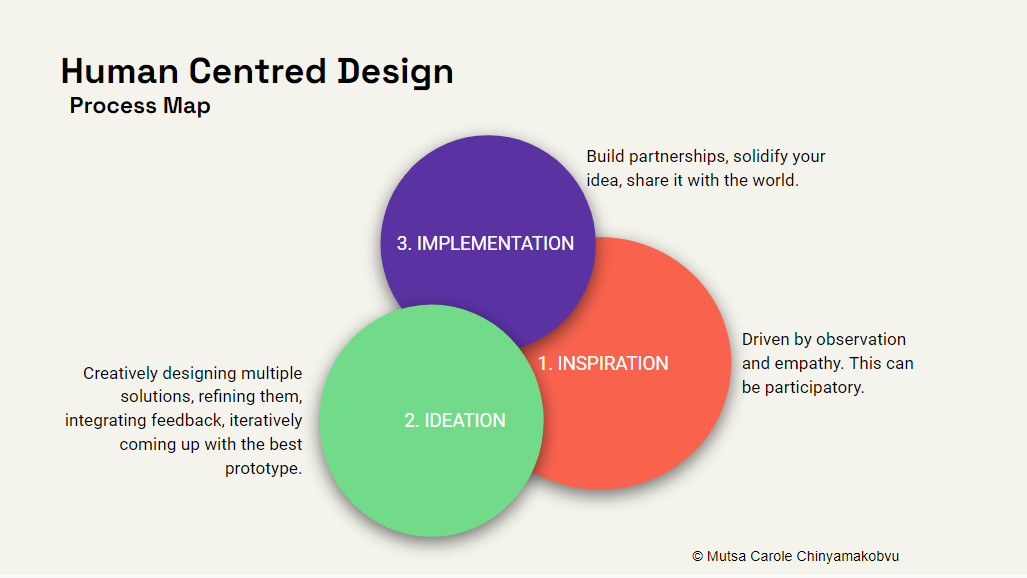

While carrying out the rapid, real-time evaluation of the current situation, the author employed HCD principles to inform an effective solution, following the HCD Process Map, which consists of the following steps:

- Inspiration - Driven by observation and empathy. This can be participatory.

- Ideation - Creatively designing multiple solutions, refining them, integrating feedback, iteratively coming up with the best prototype.

- Implementation - Build partnerships, solidify your idea, share it with the world.

The participatory facilitation techniques used to collect data were rooted in the need to find the appropriate inspiration for a learning design that would speak coherently to the recipients of the course, which was then designed in the ideation phase and finally implemented. At the end of each day, reconciling the day’s observations involved reviewing and analyzing the feedback and adapting the drafted course to fit the new narratives emerging from each set of findings. A couple of times, the ideas of how the course should be delivered were completely discarded for a new version relevant to the needs identified as priority requirements for an e-learning pivot. Documenting this experience for those who had commissioned this work was a critical step in ensuring that the implementation of this annual partnership could continue to improve in effectiveness and impact.

Raphael Graser (SSWM) describes the steps from Inspiration > Ideation > Implementation more accessibly as “Hear > Create > Deliver”. The needs assessment process corresponds only to the first stage of this three-step process. The repetitive real-time evaluation process kept going all week because the author wanted to accurately “hear” what the needs were.

How HCD could have been incorporated better

Had the author more accurately anticipated how tactfully and rapidly the lack of contextual awareness would need to be remedied, she might have been able to prepare the data collection process in a more structured format with clear objectives. The explorative and unstructured approach may have been the reason it took an entire week, out of the two weeks assigned at URDT, to elicit needs. Though a week is not necessarily a long time to conduct a needs assessment, it would have been better to solidify an approach much sooner and deliver the course over the remainder of the two weeks. The needs assessment objectives were very broad: the author wanted to explore and understand both the internal structures of the organization and the external circumstances in which it operates.

One improvement to consider is drawn from the “setting” in which the implementation is to be used, as elaborated by Lyon AR et al. (2020), who treat the setting as one of the determinants of which HCD techniques may be applied.

According to Damschroder LJ et al. (2009), outer settings are those where the implementation will be used externally to benefit the clients or customers of the organization. What the client needs, as well as the barriers and facilitators to meeting those needs, would be more accurately known and prioritized by the organization itself. Inner settings refer to the internal structures governing how an organization operates, and the complexities inherent to those structures. To be incorporated effectively, HCD techniques thus have to take into consideration the setting in which the implementation will be used.

If the author had correctly identified the setting of the implementation as an “inner” setting (one that ought to enhance internal operations of the organization), she would have conducted a data collection exercise akin to a field visit and obtained all the information needed from observation of the teaching staff in action. The further attempt to account for the outer setting (i.e., the students) ahead of what the course was directly addressing (i.e., how the teaching staff would design and deliver courses using e-learning methods) cost the author the opportunity for a more comprehensive e-learning course. It may have been sufficient to consider the context of the teaching staff as minutely as possible, and trust that spending more time equipping them would prepare them well enough for any adaptations needed for their students.

Critiques notwithstanding, the resulting implementation was a success, as shown by how engaged the teaching staff were during the course, their willingness to explore beyond the immediate scope of each day’s topic, and their immediate adoption of some of the methodologies presented. This success was likely due to the visible and extensive efforts to center them in the design of the course.

As learned through this experience and the research that followed, the scope of HCD approaches and how they can be utilized is broad, and the research in this area has much to lend to the field of MERL regarding “how” we can collect data and “how” we can implement solutions effectively.

References

- Rubin, J., & Chisnell, D. (2011). Handbook of Usability Testing: How to Plan, Design, and Conduct Effective Tests. John Wiley & Sons.

- Psychology Today (2016). Bridging the Research to Practice Gap: Innovative research methods and dissemination practices are leading the way.

- Lyon AR et al. (2020). Leveraging Human-Centered Design to Implement Modern Psychological Science: Return on an Early Investment. American Psychologist, 75(8), 1067-1079.

- Hartson HR (1998). Human-computer interaction: Interdisciplinary roots and trends. Journal of Systems and Software, 43, 103-118.

- Rogers P (2020). Monitoring and Evaluation for Adaptive Management. Working Paper Series Number 4. Better Evaluation.

- MERL Center (2023). Human-Centered Design definition.

- Graser R (2020). Human-Centered Design. Sustainable Sanitation and Water Management.

- Damschroder LJ et al. (2009). Fostering implementation of health services research findings into practice: A consolidated framework for advancing implementation science. Implementation Science, 4(1), 50. doi:10.1186/1748-5908-4-50

¹ The research-to-practice gap is an ongoing issue in the evidence-based movement that began in the medical field in the early 1990s. It refers to the low rate at which research is successfully implemented in practice, due to “many proximal factors such as inadequate practitioner training, a poor fit between treatment requirements and existing organizational structures, insufficient administrative support, and practitioner resistance to change” (Psychology Today, 2016).